How To Set Up An Effective Video Data Labeling Workflow

Apr 10, 2024

From self-driving cars on city streets to surveillance systems that watch for security threats, AI is used to analyze video data in broad and diverse ways. However, none of these applications works without accurate and efficient video data labelling. Let’s explore the steps, examples, and reasons for creating an effective video data labelling workflow.

Steps to Establish an Effective Workflow

Define Your Objectives

The foundation of any video data labelling project lies in its objectives. These objectives should be specific, measurable, and aligned with the end goals of the AI application. For instance, if the project involves an autonomous driving system, the objective might be to recognize pedestrian movements across various urban and rural settings accurately. Alternatively, a security application might focus on detecting suspicious activities under different lighting conditions and environments. Clearly defined objectives guide the labelling process, ensuring that every annotated video directly contributes to the model’s ability to achieve its intended function. This step requires a thorough understanding of the application’s requirements and the potential challenges the AI model may face in real-world scenarios.

Curate Your Dataset

The dataset’s diversity and quality directly influence the AI model’s performance. Curating a dataset involves collecting video footage encompassing the broad spectrum of scenarios the AI model will encounter. This includes variations in environmental conditions, subject diversity, and event complexity. A well-curated dataset enhances the model’s accuracy and robustness and ensures equitable performance across different demographics and situations. This meticulous process involves identifying and sourcing relevant video content, which can be challenging but is critical for overcoming the limitations of current AI technologies and paving the way for more advanced and inclusive AI applications.
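One way to make the diversity requirement checkable is to tag each clip with the conditions it covers and look for combinations that have no footage yet. The sketch below assumes a hypothetical clip metadata format (the `lighting` and `setting` fields and the clip ids are illustrative, not part of any particular tool).

```python
from collections import Counter
from itertools import product


def coverage_gaps(clips, attributes, min_clips=1):
    """Return attribute combinations covered by fewer than min_clips clips."""
    # Every value observed for each attribute, in a stable order.
    values = {a: sorted({c[a] for c in clips}) for a in attributes}
    # How many clips exist for each combination that actually occurs.
    counts = Counter(tuple(c[a] for a in attributes) for c in clips)
    return [
        combo
        for combo in product(*(values[a] for a in attributes))
        if counts[combo] < min_clips
    ]


# Hypothetical metadata for three collected clips.
clips = [
    {"id": "clip_001", "lighting": "day", "setting": "urban"},
    {"id": "clip_002", "lighting": "night", "setting": "urban"},
    {"id": "clip_003", "lighting": "day", "setting": "rural"},
]

print(coverage_gaps(clips, ["lighting", "setting"]))
# [('night', 'rural')]
```

Here the report shows that no night-time rural footage has been collected, which is the kind of gap worth closing before annotation begins.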

Annotation Guidelines

Clear and comprehensive annotation guidelines are essential for maintaining consistency and quality across the dataset. These guidelines should detail the categories to annotate, the techniques used, and how to address ambiguous or complex scenarios. Well-defined guidelines ensure that all annotators have a common understanding of the task, leading to uniform annotations across the dataset. This step often involves collaboration between domain experts, data scientists, and the annotation team to cover all necessary details and scenarios the AI model may encounter.
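Parts of the guidelines can also be encoded as automatic checks, so that obvious violations are caught before review. The following is a minimal sketch under stated assumptions: the label set, the `BoxAnnotation` fields, and the pixel-coordinate convention are all hypothetical and would come from your own guidelines.

```python
from dataclasses import dataclass

# Hypothetical category list; in practice it comes from the guidelines.
ALLOWED_LABELS = {"pedestrian", "vehicle", "cyclist"}


@dataclass
class BoxAnnotation:
    frame: int
    label: str
    x: float       # left edge, in pixels
    y: float       # top edge, in pixels
    width: float
    height: float


def validate(ann, frame_width, frame_height):
    """Return a list of guideline violations for one annotation."""
    problems = []
    if ann.label not in ALLOWED_LABELS:
        problems.append(f"unknown label: {ann.label}")
    if ann.width <= 0 or ann.height <= 0:
        problems.append("box has non-positive size")
    if (ann.x < 0 or ann.y < 0
            or ann.x + ann.width > frame_width
            or ann.y + ann.height > frame_height):
        problems.append("box extends outside the frame")
    return problems


good = BoxAnnotation(frame=0, label="pedestrian", x=10, y=10, width=50, height=100)
bad = BoxAnnotation(frame=0, label="dog", x=-5, y=10, width=50, height=100)

print(validate(good, 1920, 1080))
# []
print(validate(bad, 1920, 1080))
# ['unknown label: dog', 'box extends outside the frame']
```

Checks like these do not replace written guidance for ambiguous scenarios, but they keep the mechanical rules consistent across annotators.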

Training Your Annotators

Training annotators is crucial for achieving high-quality annotations. This training should cover the annotation guidelines, tools, and any specific challenges related to the video data. Practical training sessions give annotators the skills and knowledge to perform their tasks accurately and efficiently. They also offer an opportunity to address any questions or uncertainties, ensuring annotators are fully prepared before they begin labelling. Ongoing support and refresher training can help maintain high standards throughout the annotation project.

Quality Control

Quality control mechanisms are necessary to ensure the accuracy and reliability of the annotated data. This may involve spot checks, where a sample of annotations is reviewed for accuracy, or consensus labelling, where multiple annotators label the same videos to ensure consistency. Quality control helps identify and correct errors or inconsistencies, which is vital for training reliable AI models. A systematic quality control process can significantly reduce the risk of bias and errors in the dataset.
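For consensus labelling of bounding boxes, a common way to compare two annotators is intersection over union (IoU): the overlap area of the two boxes divided by the area of their union. The sketch below flags frames for review when the annotators disagree; the box format `(x_min, y_min, x_max, y_max)` and the 0.5 threshold are assumptions chosen for illustration.

```python
def iou(a, b):
    """Intersection over union of two boxes (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def flag_disagreements(boxes_a, boxes_b, threshold=0.5):
    """Return frames where two annotators disagree.

    boxes_a and boxes_b map frame number -> box for the same object.
    A frame is flagged if only one annotator labelled it, or if the
    IoU of the two boxes falls below the threshold.
    """
    flagged = []
    for frame in sorted(set(boxes_a) | set(boxes_b)):
        if frame not in boxes_a or frame not in boxes_b:
            flagged.append(frame)
        elif iou(boxes_a[frame], boxes_b[frame]) < threshold:
            flagged.append(frame)
    return flagged


annotator_a = {0: (0, 0, 10, 10), 1: (0, 0, 10, 10), 2: (0, 0, 10, 10)}
annotator_b = {0: (0, 0, 10, 10), 1: (8, 8, 18, 18)}

print(flag_disagreements(annotator_a, annotator_b))
# [1, 2]
```

Frame 1 is flagged because the boxes barely overlap, and frame 2 because only one annotator labelled it. Flagged frames can then go to a senior reviewer rather than re-checking the whole video.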

Iterate and Optimize

An effective video data labelling workflow is not static; it evolves based on feedback and performance analysis. Regularly reviewing the workflow makes it possible to identify bottlenecks, inefficiencies, or areas where the guidelines need refinement. Feedback from annotators, who are directly engaged with the labelling process, can provide valuable insights into potential improvements. Iterating and optimizing the workflow based on this feedback and on performance metrics ensures the process remains efficient, effective, and aligned with the project’s objectives.

Examples and Tips for Effective Video Data Labeling

When a project involves moving objects, such as vehicles or people, use automated object tracking tools where possible. These tools save a lot of time: once an object is annotated in one frame, they propagate the annotation automatically across multiple frames.
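Real tracking tools follow the object using the image content. As a much simpler stand-in that illustrates the time saving, the sketch below uses linear interpolation between two manually annotated keyframes, a technique many annotation tools offer alongside tracking. The function name and the box format `(x_min, y_min, x_max, y_max)` are assumptions for illustration.

```python
def interpolate_boxes(start_frame, start_box, end_frame, end_box):
    """Linearly interpolate a box between two annotated keyframes.

    Returns a dict mapping every frame from start_frame to end_frame
    (inclusive) to a box (x_min, y_min, x_max, y_max).
    """
    if end_frame <= start_frame:
        raise ValueError("end_frame must be after start_frame")
    span = end_frame - start_frame
    boxes = {}
    for frame in range(start_frame, end_frame + 1):
        t = (frame - start_frame) / span  # 0.0 at start, 1.0 at end
        boxes[frame] = tuple(
            s + t * (e - s) for s, e in zip(start_box, end_box)
        )
    return boxes


# The annotator draws the box on frames 0 and 4 only.
boxes = interpolate_boxes(0, (0, 0, 10, 10), 4, (40, 20, 50, 30))

print(len(boxes))
# 5
print(boxes[2])
# (20.0, 10.0, 30.0, 20.0)
```

Interpolation only works well when the object moves roughly in a straight line at constant speed between keyframes; for anything else, the annotator adds more keyframes or relies on a proper tracker.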

Use platforms that support collaborative annotation. When multiple annotators can work on the same video dataset simultaneously, the annotation process can speed up dramatically.

Incremental training makes it possible to use partially annotated datasets. Training can start while annotation is still incomplete, which provides feedback on model performance at an earlier stage and surfaces potential data issues sooner.
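On the data side, training on a partially annotated dataset mostly comes down to selecting, in each round, the clips that are finished and have not been used yet. The sketch below shows only that selection step; the `status` field, the clip ids, and the function name are hypothetical, and the training call itself is left out because it depends on your framework.

```python
def next_training_batch(clips, already_trained_ids):
    """Return fully annotated clips that no earlier round has used."""
    return [
        c for c in clips
        if c["status"] == "annotated" and c["id"] not in already_trained_ids
    ]


clips = [
    {"id": "clip_001", "status": "annotated"},
    {"id": "clip_002", "status": "in_progress"},
    {"id": "clip_003", "status": "unlabelled"},
]
trained = set()

# Round 1: only clip_001 is ready.
batch = next_training_batch(clips, trained)
print([c["id"] for c in batch])
# ['clip_001']
trained.update(c["id"] for c in batch)

# Annotation continues; clip_002 is finished before round 2.
clips[1]["status"] = "annotated"
batch = next_training_batch(clips, trained)
print([c["id"] for c in batch])
# ['clip_002']
```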

Why an Effective Video Data Labeling Workflow is Crucial

Accuracy and Efficiency

When structured meticulously, an effective video data labelling workflow dramatically enhances the accuracy and efficiency of the labelling process. Accuracy is crucial because even minor inaccuracies in labelling can lead to significant errors in AI model predictions. Efficiency, in turn, ensures that large volumes of video data can be processed and labelled within reasonable timeframes, thus accelerating the development cycle of AI models. Together, these factors contribute significantly to the overall performance of the trained AI model, enabling it to interpret and interact with the visual world more reliably and effectively. Implementing automation, such as using pre-trained models for preliminary tagging or adopting tools that facilitate easier label management, can further streamline workflows and minimize human error.
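Preliminary tagging with a pre-trained model is often combined with a confidence threshold: confident predictions go to a quick human confirmation, and the rest are annotated from scratch. The sketch below shows only this routing step. The prediction format, the `score` field, and the 0.9 threshold are assumptions; the model that produces the predictions is not specified here.

```python
def route_prelabels(predictions, accept_threshold=0.9):
    """Split model predictions into two annotation queues.

    Predictions at or above the threshold go to a quick confirm queue;
    the rest go to the full manual annotation queue.
    """
    to_confirm, to_annotate = [], []
    for pred in predictions:
        if pred["score"] >= accept_threshold:
            to_confirm.append(pred)
        else:
            to_annotate.append(pred)
    return to_confirm, to_annotate


# Hypothetical output of a pre-trained detector on three frames.
predictions = [
    {"frame": 0, "label": "vehicle", "score": 0.97},
    {"frame": 1, "label": "pedestrian", "score": 0.55},
    {"frame": 2, "label": "vehicle", "score": 0.91},
]

to_confirm, to_annotate = route_prelabels(predictions)
print([p["frame"] for p in to_confirm])
# [0, 2]
print([p["frame"] for p in to_annotate])
# [1]
```

Keeping a human in the loop for the confident predictions as well matters, because a pre-trained model can be confidently wrong on footage that differs from its training data.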

Scalability

As AI projects progress, they often require more extensive and diverse datasets to improve model robustness and adapt to broader scenarios. An effective video data labelling workflow is scalable by design: it accommodates the expanding scope of data without a proportional increase in complexity or resource requirements. This scalability is achieved through automation, efficient data management practices, and a clear organizational structure for the labelling process. By preparing for scalability from the outset, organizations can ensure their labelling workflows grow with their AI projects, facilitating continuous improvement and expansion of AI capabilities.

Cost-Effectiveness

Efficiency in the video data labelling process translates directly to cost savings. By optimizing the workflow, organizations can reduce the hours needed for manual labelling and rework due to errors, thereby lowering the overall costs of preparing datasets for AI training. Cost-effectiveness also stems from minimizing the need for extensive corrections and quality checks downstream, as a well-structured workflow includes built-in mechanisms for ensuring data quality from the start. Investing in training for annotators and selecting appropriate tools can have an upfront cost but pays dividends in reducing long-term operational expenses.

Model Performance

The ultimate goal of any AI development project is to create models that perform their intended tasks reliably under various conditions. The quality of video data labelling is a critical determinant of how well an AI model learns and how accurately it predicts. High-quality annotations serve as accurate references that the model uses to learn about the world it will operate in, directly influencing its effectiveness and reliability. Therefore, establishing a rigorous, process-driven workflow for video data labelling is imperative for achieving high-performance AI applications. This involves meticulous attention to detail in the labelling process and continuous evaluation and refinement of the workflow to ensure the highest quality of data annotation.

With a basic understanding of the workflow steps, examples, and tips above, you can approach video data labelling with confidence and carry it out efficiently and accurately. This foundational work is critical for training AI models to interpret complex video inputs, enabling the development of advanced AI applications that can see, understand, and interact with the world in ways that fundamentally transform human society.

Today’s article focused on the main aspects of video data labelling and made the case for adopting a structured approach. We hope it has provided meaningful insights to readers who want to improve their AI projects through more effective workflows for video data labelling.

Recent articles

Generative AI summit 2024

Generative AI summit 2024

Client Case Study: Automated Accounting for Intelligent Processing

Client Case Study: Automated Accounting for Intelligent Processing

Client Case Study: AI Logistics Control

Client Case Study: AI Logistics Control

Client Case Study: Inquiry Filter for a CRM Platform

Client Case Study: Inquiry Filter for a CRM Platform

Client Case Study: Product Classification

Client Case Study: Product Classification

Client Case Study: Interius Farms Revolutionizing Vertical Farming with AI and Robotics

Client Case Study: Interius Farms Revolutionizing Vertical Farming with AI and Robotics

Client Case Study: Virtual Apparels Try-On

Client Case Study: Virtual Apparels Try-On

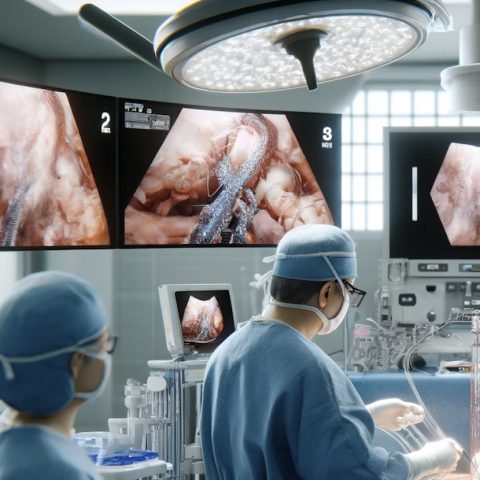

Client Case Study: SURGAR Delivering Augmented Reality for Laparoscopic Surgery

Client Case Study: SURGAR Delivering Augmented Reality for Laparoscopic Surgery

Client Case Study: Drone Intelligent Management

Client Case Study: Drone Intelligent Management

Client Case Study: Query-item matching for database management

Client Case Study: Query-item matching for database management