How To Build a Successful Data Labeling Operation

Apr 2, 2024

Data labelling is critical to developing robust AI applications, particularly in image and speech recognition. It involves human annotation of the data used to train machine-learning models, and the success of an AI project can hinge on the quality and consistency of that labelled data. This article explains what data labelling is, why it matters, and how to set up, quality-check, scale, and secure a labelling operation.

What is Data Labeling?

In essence, data labelling is “the core element to AI and machine learning.” It covers annotating and categorizing data so that algorithms and models can learn from it.

More concretely, data labelling means adding descriptive tags or informative metadata to raw data. Annotators attach relevant, correct annotations to elements within the data, and algorithms later use those attributes to identify patterns, make predictions, and complete other tasks.

Accurate, well-labelled data is essential to the success of most AI and machine learning projects. Without labels, a model has no reference for what the data means, and its results become inaccurate. Data labelling bridges the gap between raw data and AI models by showing the model what the correct decision looks like.

A Few Use Cases of Data Labeling

Data labelling supports applications such as computer vision, natural language processing (NLP), speech recognition, and autonomous vehicles. Typical labelling tasks in these areas include:

Computer Vision: Object labelling, bounding boxes, facial recognition, image segmentation, and object tracking. You can check out our blog on instance vs semantic analysis.

Natural Language Processing: Named entity recognition, part-of-speech tagging, sentiment analysis, and text classification.

Speech Recognition: Transcription, speaker identification, emotion detection, and language modelling.

Successful data labelling operations need careful planning, decision-making, and resource allocation. This section covers the basic requirements for a strong foundation for your data labelling operations.

Selection of the Right Tools for Data Labeling

Once you know which data needs to be labelled, selecting the right tools becomes an important decision for the efficiency and accuracy of the work. Some critical factors to consider when choosing data labelling tools are:

Types of Annotation: Check that the tool supports the annotation types your project needs, such as bounding boxes, polygons, key points, or semantic segmentation. For a deeper dive into how these annotations can be applied to your AI projects, explore our comprehensive guide on AI data annotations.

Tool Features: Decide which features you need, such as review of previously labelled data, collaboration, versioning, and integration with other workflows. Here is the list of the top 10 open source data labeling tools for computer vision.

UI and UX: Make sure the labelling tool offers a comfortable interface that helps annotators make correct decisions.

Scalability: Check whether the tool can handle growing data volumes and whether it supports multiple annotators working simultaneously.

Customizability: Check whether the tool lets you customize annotation templates and adapt to domain-specific requirements.

Cost: Review the pricing and licensing models of the labelling tools and match them to your budget and project needs.

Building Your Data Labeling Team

Curating a proficient and dedicated data labelling team is one of the significant initial steps toward setting up your operations. The following aspects can be kept in mind while building a team:

Expertise: Seek individuals with expertise in the specific annotation tasks your project will require, including object detection, text classification, and audio transcription.

Training: Labeling team members should undergo thorough training to fully understand the annotation guidelines, quality standards, and project objectives.

Quality Control: The team should have dedicated quality control members whose work is to ensure the correctness and uniformity of annotations.

Scalability: Design hiring and training pipelines so that onboarding new members becomes a well-practiced process as the volume to label increases and more labelling tasks are created.

Communication and Coordination: Provide effective communication channels so that labellers can raise questions or issues as soon as they arise during their work.

Why Quality Assurance Matters for Data Labeling Operations

Quality assurance in data labelling operations is essential for maintaining the reliability of the annotations. The quality of the annotations directly shapes the accuracy and performance of AI models: inconsistent or inaccurate labelling skews the training data, and models trained on it generalize poorly.

Delivering high-quality labelled data to customers or stakeholders also builds confidence in the AI system and supports customer satisfaction.

Ways of Ensuring Data Quality

Some ways of ensuring quality data in your labelling operations include:

Annotation Guidelines: Define clear annotation guidelines that explain the labelling standards, the label types, and the particulars of each task. The guidelines should be concise and leave no room for uncertainty about what needs to be annotated.

Training and Feedback Loops: Train annotators from the start, using detailed annotation guidelines and worked examples. Put a feedback loop in place so that questions are answered, clarifications are provided, and labelling errors are fixed quickly.

Inter-Annotator Agreement (IAA): Have multiple annotators label the same samples and measure how often they agree, using statistics such as Cohen’s kappa (two annotators) or Fleiss’ kappa (more than two). Discrepancies point to unclear guidelines or difficult cases and give a quantitative view of annotation quality.

Regular Audit and Review of Results: Audit the annotated data routinely so that inconsistencies and errors are identified and rectified. Sample a portion of each annotator’s work, track the results over time, and use them to monitor, evaluate, and give feedback.

Iterative Refinement: Include a mechanism for iteratively reviewing and refining initial annotations. This process facilitates the correction of inconsistencies and errors and raises the quality of the labelled data over time.
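To make the inter-annotator agreement check concrete, here is a minimal sketch of Cohen’s kappa for two annotators who labelled the same items. The function name, the label values, and the sample data are illustrative assumptions, not part of any particular labelling tool.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set of items")
    n = len(labels_a)
    # Observed agreement: fraction of items that received the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement, estimated from each annotator's label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels from two annotators on the same ten images.
annotator_1 = ["cat", "cat", "dog", "dog", "cat", "dog", "cat", "dog", "cat", "dog"]
annotator_2 = ["cat", "cat", "dog", "cat", "cat", "dog", "dog", "dog", "cat", "dog"]
kappa = cohens_kappa(annotator_1, annotator_2)
print(f"Cohen's kappa: {kappa:.2f}")
```

In this made-up sample the annotators agree on 8 of 10 items, and chance agreement is 0.5, so kappa comes out at about 0.6. What counts as an acceptable value depends on the task, so treat any threshold as a project-specific choice.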

Monitoring and Continuous Improvements

Maintaining high quality in data labelling operations requires continuous monitoring and ongoing improvement efforts. These include:

Metrics and Performance Tracking: Define the key metrics used to assess label quality, such as accuracy, precision, recall, or F1 score, and track them continuously to capture trends, emerging issues, and room for improvement.

Regular Feedback and Communication: Maintain an open line of communication with the labelling team so that they can provide input on guidelines, tools, or any problems that they find. Take prompt steps to address their concerns about efficiency and quality when possible.

Performance Incentives: Rewarding annotators for their performance boosts morale and reinforces quality standards, especially when annotators work remotely and on flexible schedules. The incentive package may include bonuses for those who consistently provide accurate annotations.
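As a rough illustration of the metrics mentioned above, the sketch below scores one annotator’s labels against a small gold-standard set that has been reviewed by an expert. The gold set, the label names, and the function name are assumptions made for the example.

```python
def per_label_scores(gold, predicted):
    """Precision, recall, and F1 per label, comparing annotator labels to gold labels."""
    if len(gold) != len(predicted):
        raise ValueError("gold and predicted must have the same length")
    scores = {}
    for label in sorted(set(gold) | set(predicted)):
        pairs = list(zip(gold, predicted))
        tp = sum(g == label and p == label for g, p in pairs)
        fp = sum(g != label and p == label for g, p in pairs)
        fn = sum(g == label and p != label for g, p in pairs)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores[label] = {"precision": precision, "recall": recall, "f1": f1}
    return scores


# Hypothetical gold-standard labels and one annotator's labels for the same items.
gold_labels = ["positive", "negative", "positive", "neutral", "negative", "positive"]
annotator_labels = ["positive", "negative", "negative", "neutral", "negative", "positive"]

scores = per_label_scores(gold_labels, annotator_labels)
accuracy = sum(g == a for g, a in zip(gold_labels, annotator_labels)) / len(gold_labels)

print(f"accuracy: {accuracy:.2f}")
for label, s in scores.items():
    print(f"{label}: precision={s['precision']:.2f} recall={s['recall']:.2f} f1={s['f1']:.2f}")
```

Recomputing these numbers on a fresh gold sample at regular intervals gives the trend line that the tracking step calls for.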

With proper quality assurance and constant process monitoring and improvement, you can ensure that all your labelling operations will be accurate and reliable.

When and How to Scale

As the data labelling landscape evolves, operations must scale to meet the growing demand for labelled data without sacrificing efficiency or quality. This section covers the essential strategies to apply when scaling your data labelling operation.

Deciding when to scale is key to smooth growth. Consider the following factors:

Workload: Assess how much data needs to be labelled and whether your in-house team can manage it comfortably. If the workload is overwhelming the team or creating bottlenecks, scaling is necessary.

Time Constraints: Assess whether your team has enough time to meet the labelling deadlines. If timelines are consistently tight, scaling may be necessary for timely delivery.

Business Growth: Consider the growth trajectory of your AI projects and the increasing demand for labelled data. If there is a clear upward trend, scaling becomes essential to keep up with the growing needs.
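The workload and deadline checks above come down to simple arithmetic. The sketch below is one way to run it; the backlog size, team size, throughput, and deadline are all hypothetical figures, so substitute your own measurements.

```python
import math


def days_to_finish(items_remaining, annotators, items_per_annotator_per_day):
    """Working days needed to clear the backlog at the current team size."""
    daily_capacity = annotators * items_per_annotator_per_day
    if daily_capacity <= 0:
        raise ValueError("team capacity must be positive")
    return math.ceil(items_remaining / daily_capacity)


def annotators_needed(items_remaining, working_days_left, items_per_annotator_per_day):
    """Smallest team size that clears the backlog before the deadline."""
    if working_days_left <= 0 or items_per_annotator_per_day <= 0:
        raise ValueError("days left and throughput must be positive")
    return math.ceil(items_remaining / (working_days_left * items_per_annotator_per_day))


# Hypothetical figures for illustration only.
backlog = 120_000      # items still to label
team_size = 8          # current annotators
rate = 400             # items one annotator labels per day
deadline_days = 20     # working days until delivery

needed_days = days_to_finish(backlog, team_size, rate)
required_team = annotators_needed(backlog, deadline_days, rate)
should_scale = needed_days > deadline_days

print(f"days needed at current size: {needed_days}")
print(f"annotators needed to hit the deadline: {required_team}")
print(f"scale up: {should_scale}")
```

With these example numbers the current team would need 38 working days against a 20-day deadline, and about 15 annotators would be required. The estimate ignores review time and ramp-up for new hires, so it is a lower bound rather than a staffing plan.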

Challenges in Scaling and How to Overcome Them

Scaling data labelling operations can present various challenges. Here are some common challenges and strategies to overcome them:

Staffing and Training: Hiring capable annotators, training them, and handling payroll and related administration is difficult even at small scale. Develop an efficient hiring pipeline, provide solid training documentation, and organize ongoing mentorship.

Workflow Streamlining: As your labelling tasks increase in volume, so does the importance of workflow optimization. Define streamlined workflow processes, incorporate automation of repetitive tasks to the furthest extent possible, and utilize various online or software-based project management and tracking systems.

Quality Control: As the scale of operations increases, quality control becomes progressively harder. Introduce stringent quality control measures, audit more regularly, and run inter-annotator agreement assessments more often to maintain consistency.

Infrastructure and Tools: Scaling requires infrastructure upgrades and may call for more advanced or robust labelling tools. Reassess your tool stack and infrastructure regularly to make sure they keep pace with increasing demand.

Security and Privacy in Data Labeling Operations

Maintaining security and privacy during data labelling operations protects sensitive information and ensures compliance with data protection regulations. This section covers key considerations and best practices for maintaining security and privacy in your data labelling operations.

Significance of Security and Privacy

Data labelling operations often involve handling sensitive data, such as personally identifiable information (PII), medical records, or financial information. Failing to maintain security and privacy can lead to severe consequences, including data breaches, legal liabilities, and damage to your organization’s reputation. It is crucial to prioritize data security and privacy throughout the labelling process.

Best Practices for Ensuring Security and Privacy

To maintain security and privacy in your data labelling operations, adopt the following best practices:

Data Access Controls: Protect sensitive data with strict access controls. Grant access only to authorized personnel, and only when the labelling job requires it.

Secure infrastructure: Ensure the safety of your labelling infrastructure, which includes servers, databases, and labelling tools. Update software regularly with new patches and encrypt your data in transit and at rest.

Anonymizing and Pseudonymizing: Wherever possible, anonymize or pseudonymize data before it is sent to the annotators. This helps protect individuals’ privacy and reduces the risk of data breaches.

Confidentiality Agreements: All annotators and team members should sign a confidentiality agreement so that they understand their responsibilities and the precautions required for security and privacy. This makes clear that the work is confidential and must follow the required protocols.

Secure Communication: When exchanging information about the data with team members, clients, and third-party vendors, use secure channels that only the relevant personnel can access.

Data Retention and Disposal: Establish clear data retention and disposal policies. Define how long data will be retained after labelling is completed, and establish a means of properly disposing of it once it is no longer needed.
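As one possible way to apply the pseudonymization practice from the list above, the sketch below replaces direct identifiers with keyed hashes before records go to annotators. The field names, the sample record, and the placeholder key are assumptions for illustration; a real key would come from your secret store, and the free-text field may still need separate redaction.

```python
import hashlib
import hmac


def pseudonymize(value, secret_key):
    """Replace an identifier with a keyed SHA-256 hash (stable, not reversible without the key)."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    # Truncation to 16 hex characters is an illustrative choice for readability.
    return digest[:16]


def prepare_for_annotation(record, pii_fields, secret_key):
    """Return a copy of the record with the listed PII fields pseudonymized."""
    return {
        field: pseudonymize(str(value), secret_key) if field in pii_fields else value
        for field, value in record.items()
    }


# Placeholder key for the example only; never hard-code a real key.
SECRET_KEY = b"replace-with-a-key-from-your-secret-store"

# Hypothetical support-ticket record.
record = {
    "customer_id": "C-1042",
    "customer_email": "jane@example.com",
    "ticket_text": "My order arrived late.",
}
safe_record = prepare_for_annotation(record, {"customer_id", "customer_email"}, SECRET_KEY)
print(safe_record)
```

Because the same input always maps to the same pseudonym under a given key, annotators can still tell that two tickets belong to the same customer without seeing who that customer is.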

Dealing with sensitive data

When dealing with sensitive data, bear in mind the following additional measures:

Data Minimization: Collect and store only the minimum amount of sensitive data needed for labelling, and do not retain it longer than necessary.

Annotator Training: Give special training to annotators who handle sensitive data, covering confidentiality and data handling protocols.

Data Sharing Agreements: If data is shared with third-party vendors or partners, put an agreement in place that specifies each party’s responsibilities and commitments for safeguarding the data and its privacy.
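Data minimization can be as simple as an allowlist applied before records leave your systems. The sketch below assumes a hypothetical sentiment-labelling task that only needs the review text and product category; the field names and values are made up.

```python
def minimize_record(record, fields_needed):
    """Return a copy of the record containing only the fields the labelling task needs."""
    return {field: record[field] for field in fields_needed if field in record}


# Hypothetical source record with more fields than the task requires.
source_record = {
    "review_text": "Battery life is shorter than advertised.",
    "product_category": "laptops",
    "customer_name": "Jane Doe",
    "card_last4": "4242",
}
task_record = minimize_record(source_record, ["review_text", "product_category"])
print(task_record)
```

An allowlist is used here rather than a blocklist so that any field added to the source later is excluded by default.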

By keeping data confidential, following best practices, and considering privacy in every aspect of data labelling work, you protect sensitive information and stay compliant with regulations. Finally, let’s summarize some of the key takeaways for building a successful data labelling operation.

4 Key Article Takeaways for Building a Successful Data Labeling Operation

As discussed above, data labelling operations must be carefully planned and managed for quality, scalability, and security. The steps and strategies presented in this blog show how building and managing a data labelling operation well can support innovation and excellence in AI and ML. Let’s recap the 4 key takeaways:

Explanation of Data Labeling: Data labelling provides the labelled data required to train machine learning and AI algorithms. It makes raw data legible and usable by attaching relevant descriptive metadata. Set Up Your Data Labeling Operations: Choose the right set of tools, build and train the labelling team, and devise effective processes to give your operations a solid base.

Quality Assurance: Quality assurance is fundamental to accurate annotations. To provide high-quality data labelling, develop clear guidelines, organize training, conduct periodic audits, and track performance.

Scaling Your Data Labeling Operations: Learn when and how much to scale based on your workload, time constraints, and business growth. Overcome challenges through smart hires, training, workflow optimization, and quality control.

Security and Privacy: Keep sensitive information secure and private by putting in place access controls, secure infrastructure, anonymization, confidentiality agreements, and secure communication channels.

By following these 4 key takeaways, you can build a successful data labelling operation that produces high-quality labelled data, scales efficiently, and keeps sensitive information secure and private. Embrace these strategies to give your AI and machine learning projects a stronger foundation. Reach out if you have any questions or needs.

Recent articles

Generative AI summit 2024

Generative AI summit 2024

Client Case Study: Automated Accounting for Intelligent Processing

Client Case Study: Automated Accounting for Intelligent Processing

Client Case Study: AI Logistics Control

Client Case Study: AI Logistics Control

Client Case Study: Inquiry Filter for a CRM Platform

Client Case Study: Inquiry Filter for a CRM Platform

Client Case Study: Product Classification

Client Case Study: Product Classification

Client Case Study: Interius Farms Revolutionizing Vertical Farming with AI and Robotics

Client Case Study: Interius Farms Revolutionizing Vertical Farming with AI and Robotics

Client Case Study: Virtual Apparels Try-On

Client Case Study: Virtual Apparels Try-On

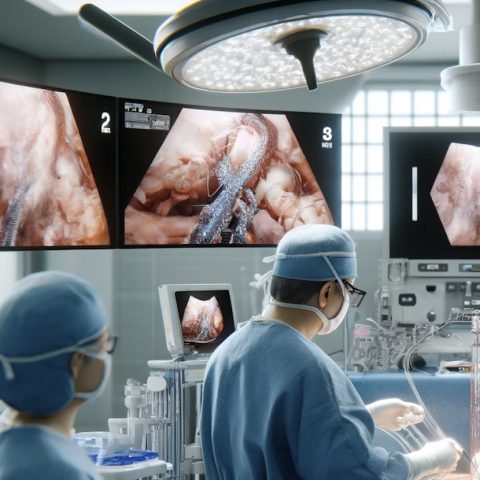

Client Case Study: SURGAR Delivering Augmented Reality for Laparoscopic Surgery

Client Case Study: SURGAR Delivering Augmented Reality for Laparoscopic Surgery

Client Case Study: Drone Intelligent Management

Client Case Study: Drone Intelligent Management

Client Case Study: Query-item matching for database management

Client Case Study: Query-item matching for database management