AI Red Teaming: Ensuring Safety and Security of AI Systems

May 7, 2024

At SmartOne.ai, we are deeply aware that as AI technologies evolve and become more complex, so do the challenges and risks associated with ensuring they are used ethically and securely— ethical AI. It is an ongoing and pressing issue in AI security to adhere to ethical guidelines and protect against potential misuse. This recognition drives our commitment to rigorous testing and evaluation of AI systems, where the practice of AI Red Teaming plays an indispensable role. AI Red Teaming is essential for identifying vulnerabilities and ensuring that AI security implementations are conducted responsibly, safeguarding user data and maintaining public trust.

Grab a drink and snack as we plunge headfirst into the world of AI Red Teaming and explore its critical role in shaping the future of safe and ethical artificial intelligence. Together, we’ll discover why mastering this proactive approach is essential for anyone invested in the next generation of AI technologies.

What is AI Red Teaming?

AI Red Teaming is not a reactive measure but a proactive and comprehensive approach to cyber threats and emerging threats by evaluating AI systems’ for safety and AI security. It involves simulating potential adversarial attacks to identify and mitigate risks, ensuring AI systems’ robustness, safety, and trustworthiness. This includes rigorous work by a group of expert security professionals in generative AI threat modelling to anticipate potential threats and counteract a potential attack and misuses.

A Historical Perspective of Red Teaming

The fascinating origins of Red Teaming can be traced back to ancient military practices, where leaders would simulate attacks on their forces to identify vulnerabilities. This ancient strategy, steeped in history, has evolved over centuries, culminating in more structured forms during the 20th century.

During the Cold War, this concept became formalized. The U.S. and its allies would regularly engage in war games, where ‘red’ teams—named for their association with the red flags of the Soviet Union—would adopt the tactics and mindset of Soviet forces to challenge and improve their strategies. This historical perspective underscores the significance of Red Teaming as it has evolved into a vital tool for AI security.

In the late 20th century, Red Teaming methodologies transitioned from the battlefield to business and government arenas. Organizations applied Red Teaming to challenge their plans and operations, fostering better decisions through critical thinking and the anticipation of adversarial tactics. This has proven especially valuable in complex, high-stakes environments where the cost of failure is high, and the need for robust security measures is paramount.

Today, Red Teaming has found a new and vital domain in Artificial Intelligence. In AI security, Red Teaming is mainly focused on stress-testing generative AI models for potential harms, ranging from safety and security issues to social biases. The adaptation from traditional military practices to digital security reflects the flexibility and enduring relevance of Red Teaming as a tool for improvement and resilience.

By understanding the incredibly vital historical roots and evolution of Red Teaming, we can better appreciate its value in contemporary settings, particularly in emerging technologies like AI, where the stakes continue to grow alongside the technology’s capabilities.

The Process of AI Red Teaming

AI Red Teaming has evolved into a highly interactive and dynamic process involving human ingenuity and sophisticated AI systems. Together, they collaborate to create adversarial prompts that challenge AI models unexpectedly. These prompts are explicitly designed to test AI systems’ limits and identify vulnerabilities malicious actors could exploit.

For instance, in the context of natural language processing (NLP) models, a red team might generate textual prompts that include subtle manipulations designed to trick the AI into generating inappropriate or harmful content. This could involve using leading questions, ambiguous language, or introducing biases to see if the AI inadvertently amplifies these biases in its responses.

In image recognition and generative AI, adversarial prompts include visually altered images that confuse the AI. These can be as simple as changing the lighting and angles in an image or as complex as incorporating visually noisy elements that humans can easily ignore, but that profoundly confuse the AI’s perception algorithms. An example of this might be presenting an image of a stop sign partially obscured or defaced with stickers—while a human driver would still recognize the sign, an AI might not, leading to potential safety issues in autonomous vehicle systems.

These “red team” models are often sophisticated AI systems trained to think like attackers. They use techniques from the emerging field of adversarial machine learning , where models are trained to produce inputs that other AI systems will misinterpret. This can include generating speech that voice-controlled systems misinterpret, potentially leading to unauthorized actions in your AI workloads.

Moreover, in cybersecurity applications, AI Red Teaming might involve simulating phishing attacks where emails or messages are crafted by AI to appear incredibly realistic, testing both the AI systems designed to detect such threats and the human employees’ ability to recognize them.

These diverse prompts significantly enhance the breadth and depth of security testing, ensuring that AI systems are robust under ordinary conditions and prepared to handle unexpected AI security risks or extreme scenarios. This continuous and rigorous testing is crucial for maintaining trust in AI applications, particularly those used in critical domains like healthcare, finance, and autonomous transportation.

AI Red Teaming for Continuous Improvement

A diverse team for Red Teaming is not just a recommendation; it’s a necessity. This diversity isn’t just about varied academic and professional backgrounds and incorporating different perspectives, cognitive approaches, and problem-solving techniques. This inclusivity is crucial for identifying a wide array of potential vulnerabilities before external threats can exploit them, fostering collaboration and shared responsibility in AI security.

Best Practices for AI Red Teaming:

Diverse Team Composition: Ensure that the Red Team or security team includes members from varied disciplines such as cybersecurity, psychology, software engineering, ethics, robust intelligence, and domain-specific areas relevant to the AI application. This diversity leads to early threat prevention and more creative and comprehensive testing scenarios and helps uncover subtle, less obvious risks, further ensuring a secure AI.

Regular and Iterative Testing Cycles: AI systems and AI algorithms should be subjected to Red Teaming at regular intervals, especially after any significant updates or changes to the system. Continuous testing helps catch new vulnerabilities that may have been introduced and ensure that the AI security and defensive mechanisms are always up to date.

Simulate Real-World Scenarios: The adversarial tests must be rooted in realistic scenarios the AI might encounter in its operational environment. This includes simulating social engineering attacks for AI systems interacting with humans or creating physically plausible perturbations for vision-based systems.

Leverage Emerging AI Technology Focused On Threat Detection: Challenge AI systems using the latest available AI tool and threat intelligence techniques in adversarial machine learning. This includes employing generative adversarial networks (GANs) to create inputs that test the AI’s robustness against inputs that try to deceive it.

Transparent Reporting and Feedback Loops: When securing AI, it is crucial to report the results transparently to all stakeholders after each testing cycle. This should be accompanied by a structured feedback mechanism that ensures learnings are integrated into the AI system’s next iteration.

Ethical Considerations: Always consider the moral implications of Red Teaming methods and the potential impacts of identified vulnerabilities. Ensure testing does not compromise user privacy or security and adheres to ethical guidelines and regulations to better protect sensitive information and sensitive data, thus always ensuring responsible AI.

Invest in Skills Development: The Red Team’s skills should be continuously updated and expanded to keep pace with new adversarial tactics and advancements in AI security. Training and development are crucial to maintaining an edge in AI security.

Red Teaming is not just about finding faults but is a critical part of the developmental process that ensures AI systems and AI security can perform safely and effectively in the face of current and future threats.

Red Teaming as a Service

The future of AI holds immense promise but also presents significant security challenges. At SmartOne.ai, we are committed to leading the way in AI Red Teaming, ensuring that AI implementations are robust, ethical, and secure. Choosing a Red Teaming as a Service (RTaaS) provider like SmartOne.AI offers the advantage of external expertise essential for uncovering and mitigating hidden vulnerabilities. As your chosen provider, we simulate realistic adversarial scenarios and deliver critical insights that internal teams might overlook, thus ensuring true ai safety.

Our unique blend of AI expertise and cybersecurity experience positions us ideally to address the multifaceted challenges of AI security. From the initial assessment to ongoing protection, our risk analysis services are designed to ensure your AI applications uphold the highest standards of integrity and reliability. We test and refine your AI models and empower your team with the knowledge and tools to maintain security autonomously—Trust SmartOne.ai to be your ally in navigating the evolving landscape of AI threats and opportunities.

Recent articles

Generative AI summit 2024

Generative AI summit 2024

Client Case Study: Automated Accounting for Intelligent Processing

Client Case Study: Automated Accounting for Intelligent Processing

Client Case Study: AI Logistics Control

Client Case Study: AI Logistics Control

Client Case Study: Inquiry Filter for a CRM Platform

Client Case Study: Inquiry Filter for a CRM Platform

Client Case Study: Product Classification

Client Case Study: Product Classification

Client Case Study: Interius Farms Revolutionizing Vertical Farming with AI and Robotics

Client Case Study: Interius Farms Revolutionizing Vertical Farming with AI and Robotics

Client Case Study: Virtual Apparels Try-On

Client Case Study: Virtual Apparels Try-On

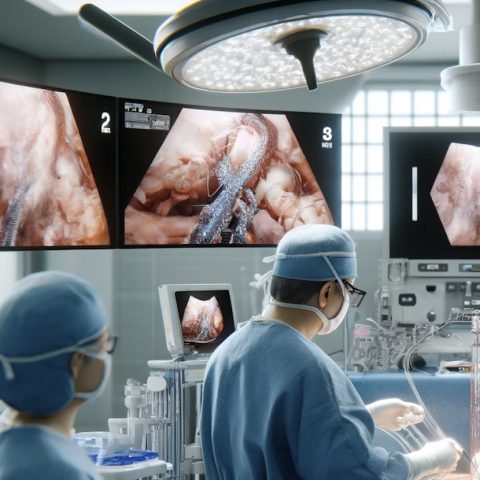

Client Case Study: SURGAR Delivering Augmented Reality for Laparoscopic Surgery

Client Case Study: SURGAR Delivering Augmented Reality for Laparoscopic Surgery

Client Case Study: Drone Intelligent Management

Client Case Study: Drone Intelligent Management

Client Case Study: Query-item matching for database management

Client Case Study: Query-item matching for database management